Why LangChain Needs CTO-Level Leadership to Reach Production

Helen Barkouskaya

.5 min read

.2 February, 2026

Head of Partnerships

.5 min read

.2 February, 2026

LangChain has become a leading framework for building applications on top of large language models, providing an approachable way to connect models, tools, and data. Yet while experimentation with LangChain is accessible, a persistent pattern has emerged: teams struggle to scale from prototype to reliable production system. Understanding why LangChain needs CTO-level leadership to bridge this gap is essential for teams that want real business impact.

Why LangChain PoCs Are Easy and Production Is Not

LangChain’s early promise is real. Developers can rapidly assemble prototypes that demonstrate impressive language capabilities with minimal code. The framework abstracts away much of the initial complexity of working with LLMs, making demos feel effortless.

Despite this apparent simplicity, the transition from PoC to full production reveals distinct challenges that require deep architectural thinking and organisational leadership throughout production, delivery, maintenance and scaling.

LangChain Production Readiness in 2026

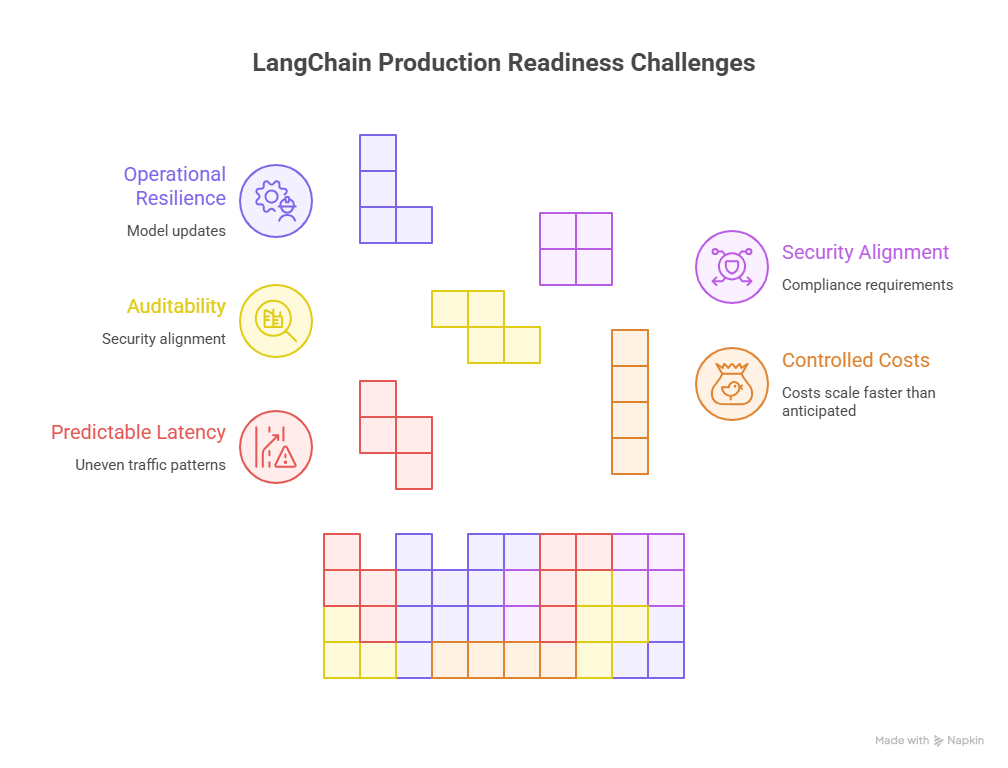

By 2026, expectations around production AI have shifted. Early experimentation with LangChain proved that teams could build compelling demos quickly. Today, the bar is much higher. Production readiness now implies predictable latency, controlled costs, auditability, security alignment, and operational resilience.

LangChain production readiness in 2026 is less about whether a workflow technically functions and more about whether it can survive sustained real-world usage. This includes handling uneven traffic patterns, evolving data sources, model updates, and compliance requirements without degrading user experience or business confidence.

Many teams discover that what worked in a prototype environment does not translate cleanly into production. Retrieval strategies that felt sufficient during testing begin to surface hallucinations. Agent chains that looked elegant in demos introduce cascading latency. Costs scale faster than anticipated. These gaps reveal that production readiness is not a feature you add at the end. It is a system-wide property that must be designed from the start.

This is where architectural discipline becomes essential, and where hidden technical decisions start to matter.

Key pillars of LangChain production readiness in 2026: user experience, operational resilience, security, compliance, and cost control.

Demos vs real users

Proofs of concept typically involve controlled inputs, limited users, and happy-path scenarios. They answer a basic question: Can this idea work at all? However, production systems must serve real users with diverse needs and unpredictable behaviours. Without the right leadership, teams discover that prototype assumptions do not hold under real-world load and variability.

Recent industry studies highlight the scale of this gap. A major MIT study found that only about 5% of generative AI pilot programs go on to deliver measurable business impact, with the remaining vast majority failing to integrate meaningfully into core operations.

Cost and latency explosion

LangChain’s modularity and flexibility can inadvertently introduce inefficiencies when chains and agents multiply calls to LLMs. Firms that scale these patterns quickly uncover escalating cost and latency pressures. In a survey of State of AI in Business 2025, organisations frequently cite a lack of infrastructure skills and architectural planning as key barriers to production readiness.

These pressures are not just academic; they affect customer experience, budget forecasts, and ultimately business confidence in AI.

LangChain Limitations and Risks in 2026: The Hidden Decisions That Break Production Systems

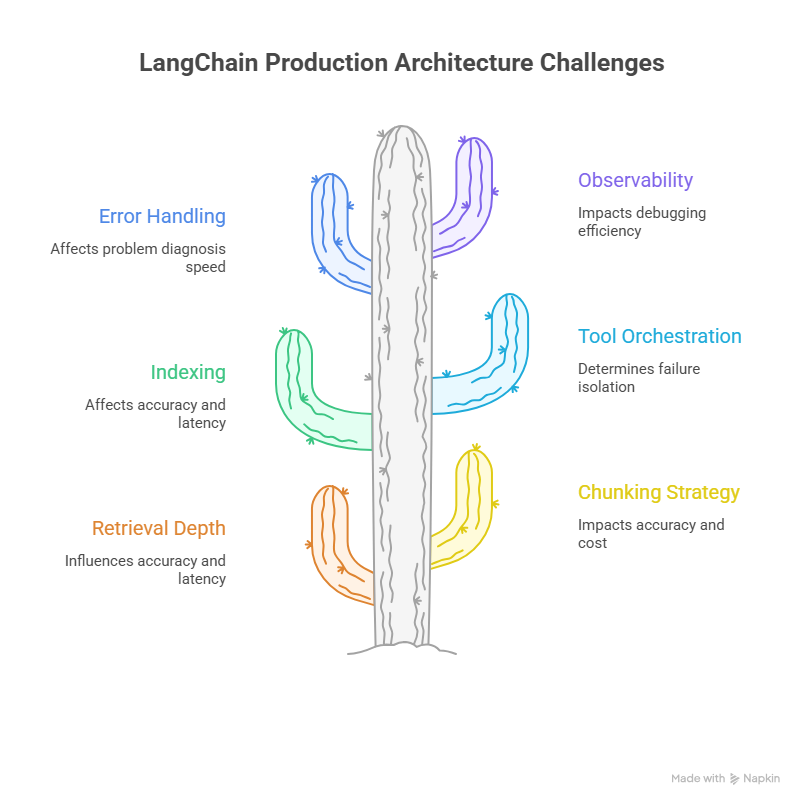

The core architectural decisions that most often break LangChain systems when scaling from prototype to production.

These choices collectively define LangChain production architecture, shaping how systems behave under real-world load, cost pressure, and operational risk. Several architectural choices in LangChain-powered systems appear straightforward in prototypes, but their implications become significant at scale. These are not simple implementation details. They are foundational system behaviours that shape reliability, cost, and correctness.

In 2026, LangChain’s flexibility remains one of its greatest strengths. It also introduces meaningful limitations and risks when systems are assembled without clear architectural ownership.

Several decisions that appear minor during prototyping become defining factors in production. Retrieval depth, chunking strategy, and indexing directly influence accuracy, latency, and cost. Tool orchestration patterns determine whether failures remain isolated or cascade across workflows. Error handling and observability decide whether teams can diagnose problems quickly or are left debugging by intuition.

These are not implementation details. They shape how a system behaves under load, how predictable operating costs become, and how reliably users receive correct answers.

LangChain itself is rarely the root cause of failure. The risk usually emerges from treating production systems as a collection of loosely connected components rather than as an integrated platform with explicit reliability, performance, and governance requirements. Without deliberate design, teams inherit silent fragility that only surfaces once real users arrive.

This naturally raises a broader question: who is responsible for making these tradeoffs coherent across the organisation?

Data retrieval and chunking

Retrieval-augmented generation is a core pattern in many LangChain applications. Decisions about data retrieval depth, chunking strategy, and index structure determine how much context the language model sees. Too little context increases hallucination risk, too much context increases cost and latency. Balancing these tradeoffs requires a nuanced understanding of both data and operational constraints.

Tool orchestration

LangChain’s strength comes from its ability to orchestrate models with external tools and APIs. In production, every tool interaction introduces potential failure modes, data dependencies, and performance considerations. These interactions must be formally architected rather than ad hoc, with orchestration that works in demos frequently becoming a source of cascading failures in live production systems.

Error handling and observability

Prototype systems often assume happy paths. In production, errors are inevitable. A system must gracefully handle timeouts, partial failures, and retries in ways that do not cascade into user-visible problems. Observability — the ability to understand, trace, and quantify why a system behaves as it does — becomes indispensable. Without structured logging and monitoring, teams wind up debugging by guesswork, undermining system stability.

So, the problem is rarely with LangChain itself. It is usually how it is used, treated like a composition of layers that require surrounding production infrastructure.

LangChain Leadership: Why Technical Success Requires Organisational Ownership

LangChain production challenges do not sit neatly inside a single team. They span backend engineering, data pipelines, infrastructure, security, product decisions, and financial planning. As a result, technical success depends on organisational leadership, not just developer effort.

LangChain leadership means establishing shared standards for architecture, defining acceptable cost and latency thresholds, and ensuring that production requirements shape implementation from the outset. It also means creating feedback loops between engineering and business stakeholders so that performance, risk, and budget remain aligned as systems evolve.

Without this ownership, teams optimise locally. Engineers focus on features, product teams chase functionality, and infrastructure reacts after problems appear. What’s missing is a unifying perspective that connects experimentation with operational reality.

That perspective typically lives at CTO level, where technical architecture and business outcomes intersect.

Why These Decisions Sit at CTO Level

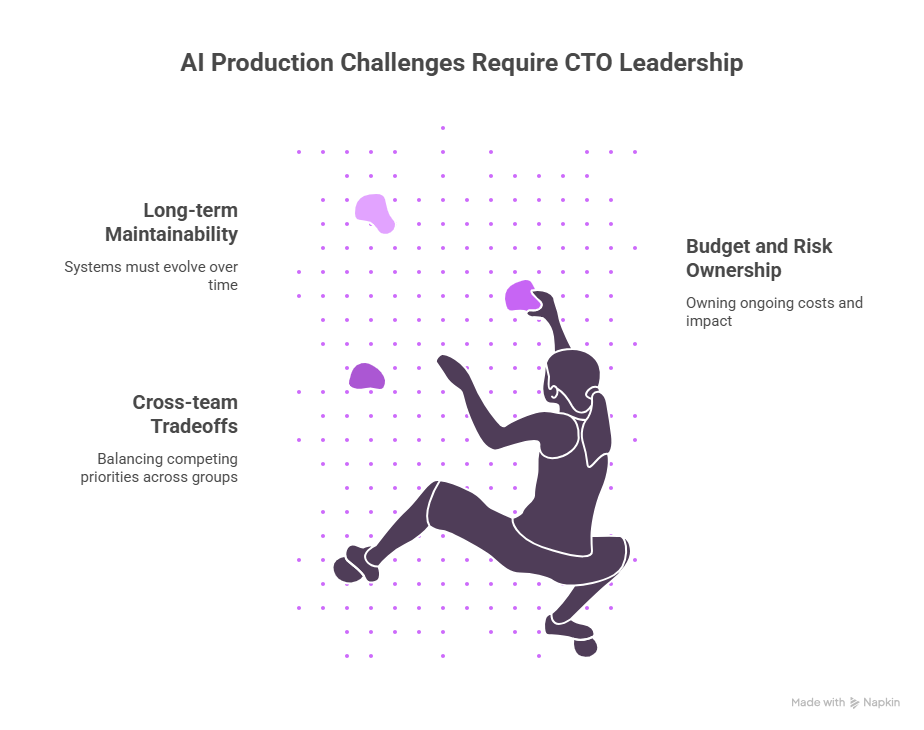

These challenges do not belong to a single discipline. They span engineering, data, infrastructure, security, product, and finance. As a result, they naturally sit at the level of CTO-level AI leadership.

AI production systems demand CTO-level ownership across maintainability, risk management, and cross-team alignment.

Cross-team tradeoffs

Production AI systems touch multiple parts of an organisation: backend engineering, data science, DevOps, product management, and security. CTO-level leaders are uniquely equipped to balance competing priorities across these groups, ensuring that architectural decisions align with both technical feasibility and strategic business goals.

Budget and risk ownership

Responsibility for AI production systems means owning ongoing costs, not one-time project budgets. When latency spikes or cost overruns occur, the impact is immediate and tangible.

Only leaders with a holistic view of both technical drivers and business outcomes can steward budgets effectively through these transitions.

Long-term maintainability

Reaching production is not the finish line. Systems must evolve as models, tools, and business requirements change. Sustainable LangChain architectures anticipate future scaling scenarios, enable safe iteration, and minimise long-term technical debt. This requires leadership that consistently bridges experimentation with disciplined delivery.

The Role of a Fractional AI CTO in LangChain Production

For many organisations, hiring a full-time AI-experienced CTO is impractical. A fractional AI CTO offers a focused and effective alternative, embedding CTO-level ownership precisely where it is needed.

For teams evaluating this model, it’s also important to understand how fractional AI CTOs differ from traditional AI consultants. We break this down in detail in Fractional AI CTO vs AI Consultant article, including what “hands-on LangChain delivery” really means in practice.

Architecture ownership

A fractional AI CTO can define, document, and enforce patterns for production-ready LangChain architecture. This includes choosing appropriate orchestration paradigms, establishing retrieval and chunking standards, and selecting tools that support long-term observability and resilience.

Delivery alignment

Connecting strategic goals with day-to-day delivery requires architecture owners to participate in sprint planning, review cycles, and operational readiness checks. A leader in this position ensures that production requirements shape implementation rather than being retrofitted.

Team enablement

Finally, production AI requires cross-functional fluency. Teams must be skilled not only in coding LangChain prototypes but also in writing tests, monitoring production behaviours, and responding to incidents. Leadership that enables and elevates these capabilities across teams reduces technical debt and improves reliability.

Summary

LangChain offers an accessible way to create powerful AI-driven workflows. However, moving from demonstrative prototypes to robust, scalable production systems brings a host of architectural and organisational challenges. Research shows that most AI initiatives fail to deliver meaningful results when they lack deep integration into workflows and strategic oversight.

These challenges involve tradeoffs that span cost, performance, observability, and long-term evolution. Given their breadth and impact, these decisions sit squarely within the domain of CTO-level AI leadership. By providing strategic alignment, architectural clarity, and cross-team coordination, a leader at this level can help LangChain systems mature beyond experimentation into dependable production applications.

Later in your maturity journey, a model such as a fractional AI CTO can deliver targeted leadership and architecture ownership to help navigate these transitions while aligning with broader organisational goals.