AI Platform Engineering

Turning Data Into Intelligence

Intro

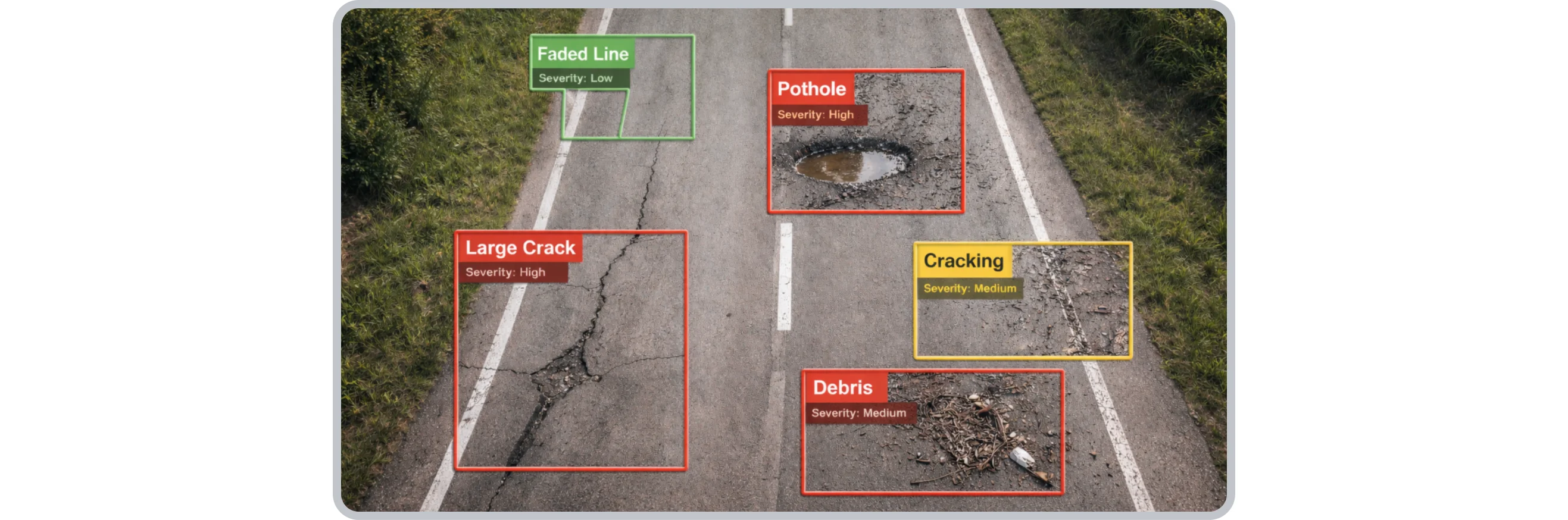

AI Platform for automated road condition assessment using computer vision

Shepherd Services operates road asset management systems that rely on large volumes of road imagery. Traditionally, identifying defects such as potholes, cracking, surface wear, and line fading relied on manual review and was subject to inconsistent interpretation.

Whitefox was engaged to build an AI system that:

Automatically detects road defects from captured imagery

Classifies each defect according to a domain-specific taxonomy

Measures defect magnitude and condition

Assigns a consistent, deterministic severity rating (1–5 scale)

Outputs structured, standardised data for integration into the RACAS® platform

We built a system that looks at road images, finds defects, evaluates how serious they are, and converts that into structured condition scores that engineers can use immediately.

The goal was not just detection, but repeatable, measurable, production-grade road condition intelligence at scale.

To deliver production-grade machine learning systems that convert unstructured data into structured, decision-ready intelligence, Whitefox built robust AI pipelines, validated model engines, deterministic scoring systems and cloud-native inference infrastructure, transforming raw data into trusted operational outputs.

The Challenge

Turning Raw Data into Production-Grade AI

Organisations often capture large quantities of high-value operational data (e.g., imagery, telemetry, logs, sensor streams) but struggle to translate it into actionable, consistent, real-world insight.

Common barriers to AI success include:

Unstructured data that cannot be automated

Generic detection models that lack domain alignment

Lack of deterministic scoring or business logic

Weak validation frameworks that don’t scale

Infrastructure that fails under production load

When Shepherd Services engaged Whitefox for the RACAS® platform, these challenges were evident: vast imagery datasets with no automated intelligence layer and no scalable way to standardise severity or condition outputs across tens of thousands of records.

Whitefox was brought in to transform this into a reliable, repeatable, production AI pipeline.

Our Approach

Engineering the Production AI Pipeline

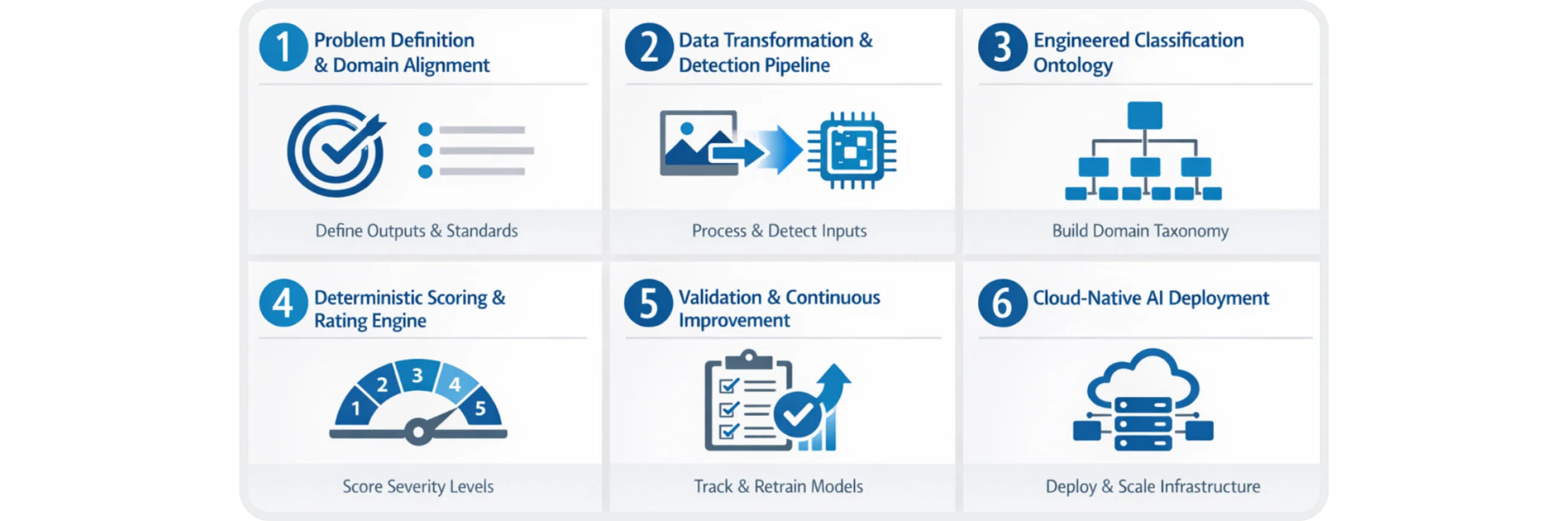

We apply a structured AI engineering discipline that combines machine learning, software infrastructure and operational rigour.

Step 1: Problem Definition & Domain Alignment

We worked closely with Shepherd’s product and technical teams to define:

What “useful output” looks like

What engineering standards to align to

What performance targets are required

What integration endpoints must be supported

This reframed the problem from “build a model” to “ship a production system.” We take an intent-first approach to building AI solutions: start with the decisions users actually need to make, then engineer the AI system backwards from that intent.

Step 2: Data Transformation & Detection Pipeline

Raw unstructured inputs (e.g., imagery) were transformed into machine-readable formats using an automated AI pipeline:

Image preprocessing

Object detection architecture (YOLO format)

Bounding box generation

Confidence scoring and filtering

Structured export for downstream systems

This pipeline eliminated manual review and enabled continuous processing of new data.
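As a rough illustration of the bounding box, confidence filtering and structured export steps listed above, the sketch below parses detections written in a YOLO-style text format and exports the ones that pass a threshold as JSON. The six-field line layout (class, centre x, centre y, width, height, confidence, with coordinates normalised to the image size), the `Detection` type, the 0.5 threshold and the JSON shape are all assumptions made for this example, not details of the RACAS® pipeline.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Detection:
    class_id: int
    confidence: float
    # Pixel-space box corners
    x_min: int
    y_min: int
    x_max: int
    y_max: int


def parse_yolo_line(line: str, img_w: int, img_h: int) -> Detection:
    """Parse an assumed 'class cx cy w h conf' line with normalised coordinates."""
    class_id, cx, cy, w, h, conf = line.split()
    # Scale normalised centre/size values to pixels
    cx_px, cy_px = float(cx) * img_w, float(cy) * img_h
    w_px, h_px = float(w) * img_w, float(h) * img_h
    return Detection(
        class_id=int(class_id),
        confidence=float(conf),
        x_min=round(cx_px - w_px / 2),
        y_min=round(cy_px - h_px / 2),
        x_max=round(cx_px + w_px / 2),
        y_max=round(cy_px + h_px / 2),
    )


def filter_and_export(lines, img_w: int, img_h: int, min_confidence: float = 0.5) -> str:
    """Drop low-confidence detections and serialise the rest as JSON."""
    detections = [parse_yolo_line(line, img_w, img_h) for line in lines if line.strip()]
    kept = [d for d in detections if d.confidence >= min_confidence]
    return json.dumps([asdict(d) for d in kept], indent=2)


# Hypothetical detections for a 1000x800 image
sample = [
    "0 0.50 0.50 0.20 0.10 0.91",
    "2 0.25 0.75 0.10 0.10 0.32",  # below the threshold, so it is filtered out
]
exported = filter_and_export(sample, img_w=1000, img_h=800)
print(exported)
```

In a real pipeline the threshold would more likely be set per class from validation results than fixed globally.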

Step 3: Engineered Classification Ontology

Rather than adopting generic labels, Whitefox built a hierarchical, domain-aligned classification structure, including:

Primary defect / feature classes

Secondary groupings based on risk or category

Explicit noise filtering classes

Confidence thresholds for model outputs

This ensured that machine predictions were interpretable and actionable by domain experts.
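One way to picture such an ontology is as a lookup table that maps each raw model class to a primary class, a secondary grouping, a confidence threshold and a noise flag. The sketch below is illustrative only: the class IDs, group names, thresholds and the `shadow` noise class are hypothetical, and only the defect names come from the examples given earlier.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ClassSpec:
    primary: str            # primary defect / feature class
    group: str              # secondary grouping by risk or category
    min_confidence: float   # per-class confidence threshold
    is_noise: bool = False  # explicit noise class, never reported


# Hypothetical ontology entries; IDs, groups and thresholds are illustrative.
ONTOLOGY = {
    0: ClassSpec("pothole", "structural", 0.50),
    1: ClassSpec("cracking", "structural", 0.45),
    2: ClassSpec("line_fading", "markings", 0.60),
    3: ClassSpec("shadow", "noise", 0.0, is_noise=True),
}


def interpret(class_id: int, confidence: float) -> Optional[dict]:
    """Return a domain-level record, or None if the prediction should be dropped."""
    spec = ONTOLOGY.get(class_id)
    if spec is None or spec.is_noise or confidence < spec.min_confidence:
        return None
    return {"primary": spec.primary, "group": spec.group, "confidence": confidence}


print(interpret(0, 0.9))   # reported as a structural pothole
print(interpret(3, 0.99))  # noise class, dropped regardless of confidence
```

Keeping noise as an explicit class gives the model somewhere to put confusing artefacts, rather than forcing them into a defect class.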

Step 4: Deterministic Scoring & Rating Engine

Detection alone does not equal intelligence.

Whitefox developed a custom rating engine that:

Converts model confidence into weighted scores

Applies engineered severity tables

Converts magnitude into 1–5 severity bands

Produces deterministic outputs for operational use

This made the AI outputs repeatable and defensible rather than subjective.
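A minimal sketch of how a deterministic rating engine of this kind could work is shown below. The severity table values, the magnitude unit (assumed here to be affected area in square metres) and the confidence-weighted averaging rule are assumptions for illustration; the actual tables and weighting used in the engagement are not described in this case study.

```python
from bisect import bisect_right

# Hypothetical severity table: the four boundaries separate bands 1 to 5.
# A magnitude equal to a boundary falls into the higher band.
SEVERITY_TABLE = {
    "pothole": [0.05, 0.2, 0.5, 1.0],
    "cracking": [0.5, 2.0, 5.0, 10.0],
}


def severity_band(defect: str, magnitude: float) -> int:
    """Map a measured magnitude to a 1-5 severity band by table lookup."""
    return bisect_right(SEVERITY_TABLE[defect], magnitude) + 1


def segment_score(detections) -> float:
    """Confidence-weighted mean severity over (defect, magnitude, confidence) tuples."""
    total_weight = sum(conf for _, _, conf in detections)
    if total_weight == 0:
        return 0.0
    weighted = sum(severity_band(d, m) * conf for d, m, conf in detections)
    return round(weighted / total_weight, 2)


# Hypothetical detections for one road segment
segment = [("pothole", 0.3, 0.9), ("cracking", 0.4, 0.6)]
print([severity_band(d, m) for d, m, _ in segment])  # [3, 1]
print(segment_score(segment))                        # 2.2
```

Because the rating is a pure table lookup with no randomness or hidden state, the same detections always produce the same score, which is what makes the output auditable.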

Step 5: Validation & Continuous Improvement

We implemented a rigorous validation framework that:

Tracks performance by class (precision, recall)

Expands training data intelligently

Applies continuous retraining workflows

Tunes confidence thresholds for production use

This framework scaled from tens of thousands to hundreds of thousands of labelled examples while maintaining performance integrity. It incorporates best practices from “Deep Dive into DeepSeek for Software Engineers”, including structured evaluation routines and metric tracking that support continuous learning and model interpretability.
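Per-class tracking of precision and recall can be sketched as follows. The example assumes predictions have already been matched to ground-truth labels (for instance by box overlap), with `None` standing for "no object" or "no prediction"; the matching step, the function name and the sample data are hypothetical.

```python
from collections import Counter


def per_class_metrics(pairs):
    """Compute precision, recall and F1 per class from (truth, prediction) pairs."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in pairs:
        if truth is not None and truth == pred:
            tp[truth] += 1
        else:
            if pred is not None:
                fp[pred] += 1   # predicted a class that was not there
            if truth is not None:
                fn[truth] += 1  # missed or mislabelled a real defect
    metrics = {}
    for label in sorted(set(tp) | set(fp) | set(fn)):
        predicted = tp[label] + fp[label]
        actual = tp[label] + fn[label]
        precision = tp[label] / predicted if predicted else 0.0
        recall = tp[label] / actual if actual else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics


# Hypothetical matched results
pairs = [
    ("pothole", "pothole"),
    ("pothole", "pothole"),
    ("pothole", None),         # missed pothole
    ("cracking", "pothole"),   # cracking mislabelled as pothole
    ("cracking", "cracking"),
    (None, "cracking"),        # false alarm
]
metrics = per_class_metrics(pairs)
for label, m in metrics.items():
    print(label, m)
```

Re-running the same function at several candidate confidence thresholds is one simple way to choose a production threshold for each class.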

Step 6: Cloud-Native AI Deployment

AI at scale requires more than models — it needs infrastructure.

Whitefox designed and delivered:

Cloud inference clusters

Data transformation services

API integration layers

Secure, hosted environments

Monitoring and logs for production AI performance

This ensured the system operated reliably at scale without manual intervention.
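For the monitoring and logging item, a small sketch of a structured per-batch log record is given below. The field names, the logger name and the choice of mean confidence and latency as health signals are assumptions for illustration, not the metrics used in the delivered system.

```python
import json
import logging
import statistics

logger = logging.getLogger("inference")  # hypothetical logger name


def batch_log_record(batch_id: str, confidences, started_at: float, finished_at: float) -> dict:
    """Summarise one inference batch as a JSON-serialisable record."""
    return {
        "batch_id": batch_id,
        "detections": len(confidences),
        # A falling mean confidence over time can hint at data drift
        "mean_confidence": round(statistics.fmean(confidences), 3) if confidences else None,
        "latency_ms": round((finished_at - started_at) * 1000, 1),
    }


# Hypothetical batch: three detections, timestamps in seconds
record = batch_log_record("batch-001", [0.9, 0.8, 0.7], started_at=10.0, finished_at=10.25)
logger.info(json.dumps(record))
print(record)
```

Emitting one machine-readable record per batch keeps the logs easy to aggregate in whichever monitoring stack is in use.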

Solution

Delivered: Production-Grade AI Systems

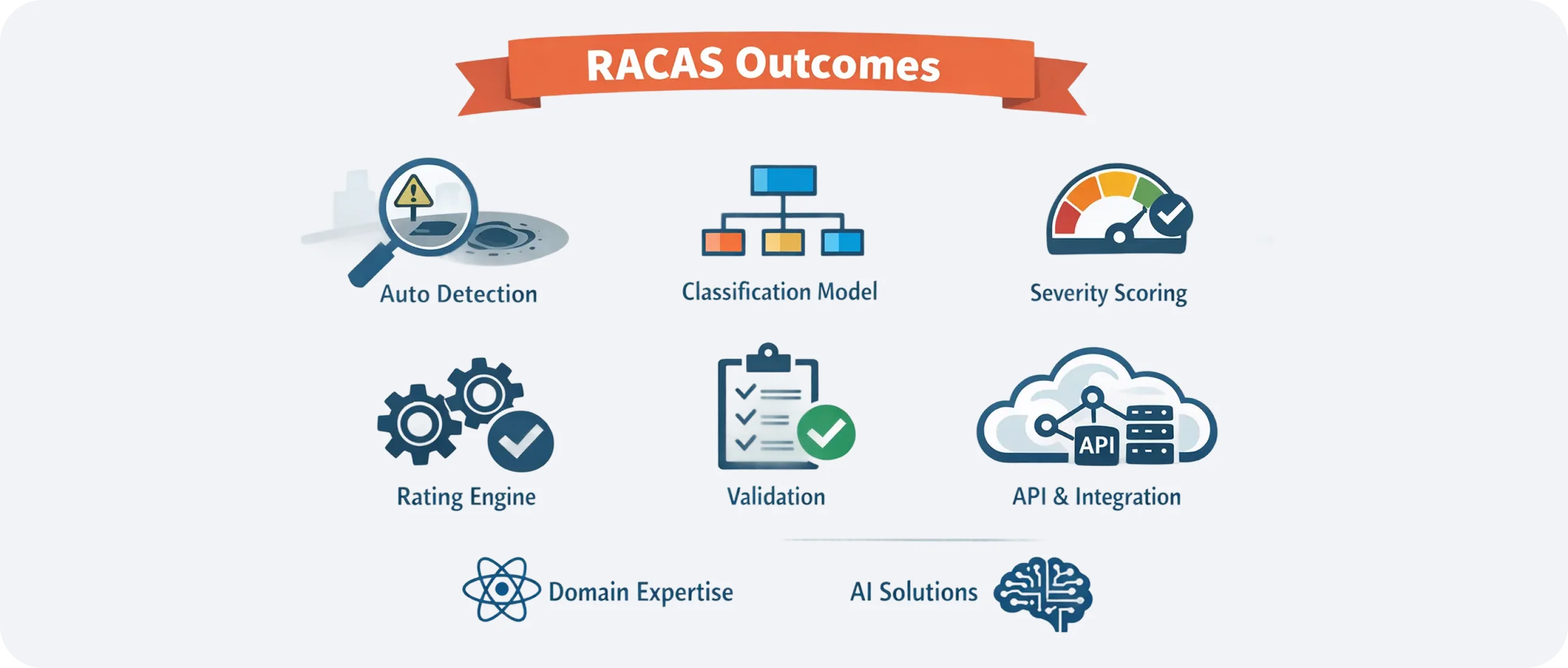

Whitefox delivered a complete, production-ready AI platform (not just isolated models) designed to operate reliably at enterprise scale. The system transforms unstructured inputs into structured operational intelligence, automatically detecting features and anomalies, assigning deterministic severity and condition scores, validating model performance, and integrating directly into RACAS through APIs. Built on cloud-native infrastructure, the platform scales without manual intervention and meets standards-driven enterprise requirements:

Converts unstructured data into structured intelligence

Detects features and anomalies with confidence scoring

Produces deterministic severity and condition scores

Validates model performance with engineering rigour

Integrates with operational platforms via APIs

Scales cloud-native without manual bottlenecks

Is ready for enterprise and standards-driven environments

The same platform pattern can be reused in other industries for projects involving computer vision, predictive modelling, compliance systems, anomaly detection and geospatial analytics.

Before vs after

Business Outcome

Organisations that partner with Whitefox see a measurable shift in how quickly AI reaches production:

| Before Whitefox | After Whitefox |
|---|---|
| Manual, inconsistent analysis | Automated, scalable inference |
| Unstructured raw data | Structured, decision-ready outputs |
| Prototype or pilot models | Production-grade AI systems |
| Weak validation | Rigorous performance governance |
| Ad-hoc infrastructure | Cloud-native, resilient pipelines |

Our work turns AI from an experiment into operational value.

The Impact

Key Outcomes from the RACAS Engagement

While the context was road asset management, the underlying AI engineering strategy is domain-agnostic.

Automated feature detection at scale

Domain-aligned classification ontology

Confidence-weighted severity scoring

Deterministic rating engines

Production validation framework

API and infrastructure integration

This reflects Whitefox’s deep AI engineering domain expertise, applicable across use cases and industries.

Architecture

Technical Highlights

Machine Learning & Computer Vision

Custom object detection pipelines

YOLO-derived architectures

Confidence calibration and filtering

Label hierarchy and ontology design

Model Governance

F1 performance tracking

Precision/recall balancing

Class-level error analysis

Continuous retraining workflows

AI Infrastructure

Cloud inference clusters (AWS, GCP)

Managed batch and streaming pipelines

API integration layers

Monitoring and logging at enterprise scale

Operational Integration

Export-ready structured data

Dashboard compatibility

API hooks into workflows

Compliance-ready outputs

Why us

Why Whitefox

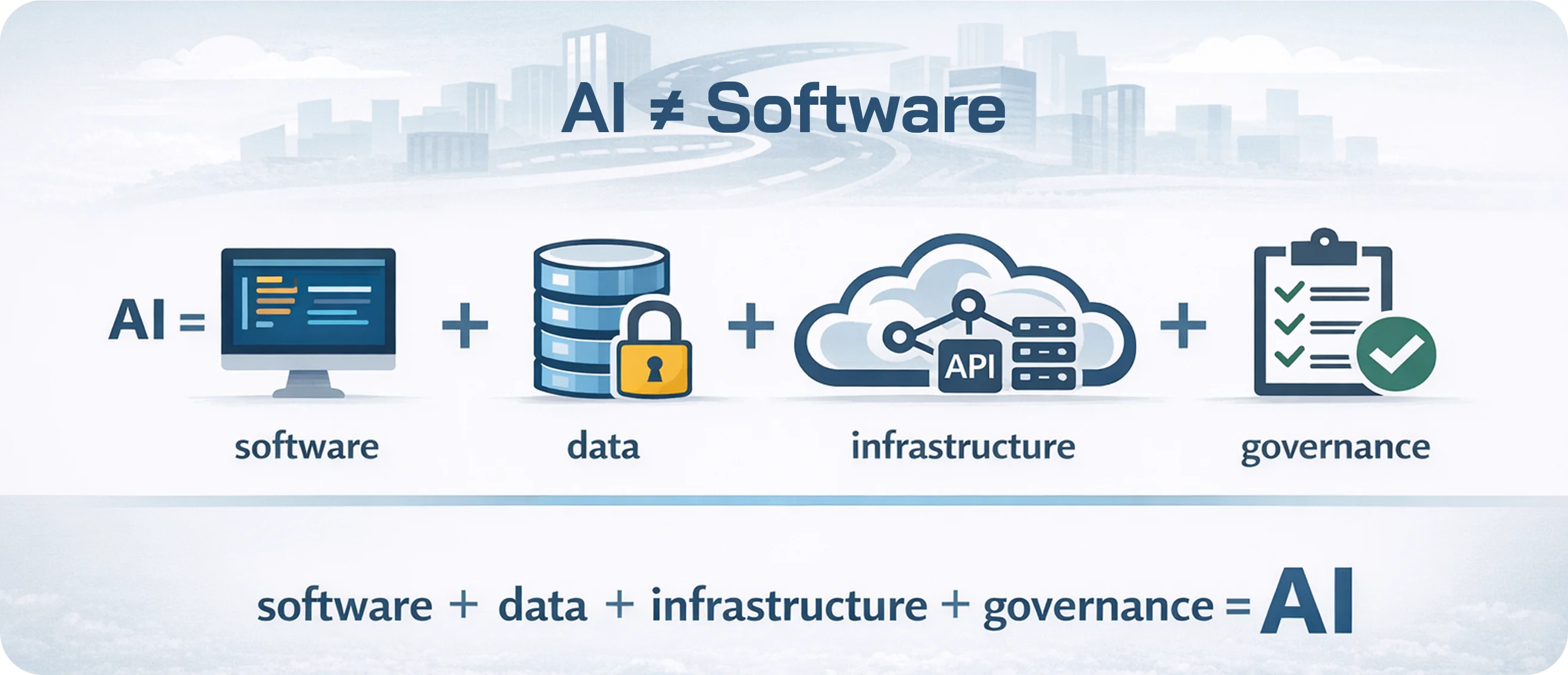

AI succeeds in production only when models, data, infrastructure, and governance are engineered as a single system. This systems-first approach builds on our intent-first product philosophy, where AI is designed around real operational decisions, not abstract model performance.

Whitefox approaches AI as a full-stack engineering discipline. We design robust pipelines that operate reliably in real environments, validation frameworks that scale with growing datasets, deterministic scoring engines aligned with business logic, and cloud-native infrastructure built for continuous operation. Our integration layers ensure AI outputs flow directly into operational workflows, not isolated dashboards.

This systems-first approach is why our work moves beyond experimentation. We don’t deliver standalone models: we architect production AI platforms organisations can depend on.

Conclusion

Partner with Whitefox

Production AI requires more than experimentation. It demands structured platform engineering, robust system architecture, deterministic logic, and infrastructure that performs under real-world conditions.

If your organisation is working with complex, unstructured data and needs reliable, scalable intelligence, Whitefox can architect and deliver the full production AI system. From machine learning pipelines and scoring engines to validation frameworks and cloud deployment, we design solutions that integrate seamlessly into operational environments.

From Prototype to Production AI

If you’re ready to move from prototypes to production AI, let’s discuss your AI system architecture and roadmap.